An attempt to scrub the gathering moss off some stones and help them keep rolling smoothly along ... Thoughts on information technology and anything else, by Tony Austin, after a career in Science and the IT industry, and now somewhat contemplative retirement

Tuesday, November 29, 2005

Ray Ozzie's new (Microsoft) weblog

This could prove to be a real winner, if it catches on and can be made to work effortlessly -- particularly if it becomes an open standard.

If done right, SSE could provide a generic and non-proprietary form of two-way synchronization between any two sources.

I'm a big fan of the excellent Lotus Notes replication model (particularly since Release 4, when replication became sensitive to field-level changes not just document-level changes). Notes replication of course is quite proprietary, so it would be great if SSE provides a form of non-proprietary replication between foreign sources.
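To make the difference between document-level and field-level replication concrete, here is a minimal Python sketch. It is purely illustrative: it is not the actual Notes replication algorithm, nor anything taken from the SSE draft spec. The data model (each field carrying a hypothetical per-field version counter) and the function names are assumptions made for the example.

```python
from typing import Any, Dict, Tuple

# Hypothetical model: each field stores (value, version), where version is a
# per-field change counter. Illustration only, not the Notes or SSE format.
Field = Tuple[Any, int]
Doc = Dict[str, Field]


def merge_document_level(a: Doc, b: Doc) -> Doc:
    """Whole-document winner: the copy holding the most recent change wins outright."""
    version_a = max((version for _, version in a.values()), default=0)
    version_b = max((version for _, version in b.values()), default=0)
    return dict(a if version_a >= version_b else b)


def merge_field_level(a: Doc, b: Doc) -> Doc:
    """Per-field winner: each field comes from whichever copy changed it last."""
    merged: Doc = {}
    for name in a.keys() | b.keys():
        candidates = [doc[name] for doc in (a, b) if name in doc]
        # On a version tie the first candidate (from copy a) is kept.
        merged[name] = max(candidates, key=lambda field: field[1])
    return merged
```

If one replica edits only the subject and the other edits only the body, the document-level merge discards one of the two edits, whereas the field-level merge keeps both. That is the practical benefit of replication that is sensitive to field-level changes.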

Ray points out the draft spec for SSE (somewhat abstruse as are all such specification documents), and Frequently Asked Questions (this is quite informative).

Friday, November 25, 2005

Collaboration competition - Microsoft versus Lotus in 2005/2006

There were a few glimpses of Microsoft "Office 12", due late in 2006 or thereabouts. It seems that the Office underpinnings have been significantly reworked and Office 12 will have some nice new collaborative features. Pity about having to wait another year or so before it's let loose upon us!

Meanwhile Lotus Notes/Domino 7 has shipped and IBM has the next "Hannover" release well into development. There's a lot of life left in Notes and Domino.

I've been much closer to Lotus Notes/Domino than to the Microsoft products, so have been doing some research to increase my understanding of the features and positioning of the latter. Here are a few articles that I've looked at recently which give a nice independent summary of the Microsoft collaboration product range:

- Increase Efficiency With Collaboration [using Microsoft technologies] - September 2004

- Microsoft's Expanding Collaboration Strategy - December 2004

- Microsoft Sets Its Sights on Collaboration - October 2005

Competition is good for us all: it's great to see two of the big players working so hard to provide productivity features. If the large attendance at yesterday's seminar is any indication -- and this was just for Melbourne, it's probably the same all around the globe -- Microsoft certainly is managing to generate considerable interest, despite the regular slippages in their product release dates.

Wednesday, November 23, 2005

What improves your skills as a developer?

Monday, November 21, 2005

Serendipitous slitherings

This led me to think back to my chemistry (industry and teaching) days, prior to getting into computing. I clearly remember one particular organic chemistry lecture about the 19th century chemist Kekulé who woke up one morning from a dream in which a snake was eating its own tail. From this he deduced the structure of the benzene molecule (formerly also spelled benzine), the now-familiar hexagonal ring of six carbon atoms that is the foundation of the so-called aromatic organic compounds.

This sort of thing has happened to me a couple of times before. The workings of the mind are indeed strange and wonderful. What on the surface appears serendipitous probably isn't so below the surface, in the nether regions of the brain where such problems are solved by some mysterious process.

I'd be interested to hear from you if anything like this has happened to you (IT design problems, or whatever).

Wednesday, November 09, 2005

Once every 3 months?

So IDC recommends in this article: Asia-Pac firms unprepared for IT threats -- and they comment that many companies "may still be susceptible to disruptions from security breaches or natural disasters."

Which of course leads me to give you a not-so-gentle nudge! Have YOU been carrying out such regular tests, and modifying your backup and recovery procedures to cope with changing circumstances?

UPDATE (17 November 2005):

Apani comments on a Harris Interactive poll about backup frequency: "Everyone knows that they should back up the data on their computers, but how many actually do it? When was the last time you did it? Out of the 2,300 US adults who were polled in late July of this year 685 (roughly 33%) didn’t back up at all…. And the majority of respondents who did back up, only perform this task once a year." Click here to read the article: You know you should... I hope that businesses would poll better than this! (Perhaps not too much better?)

Monday, September 19, 2005

Blissfully getting to know less about everything

So what hope do I have of understanding the deeper mysteries of the universe? My background is that of being a scientist (something of a generalist). I've been making my living from the IT industry since 1970, but I'm still fascinated by all things scientific, cosmology included.

I recall reading a special issue of Scientific American around twenty years ago. One of the articles mentioned that the universe was thought to be around 13 billion years old, while another article indicated that the universe was maybe 26 billion light years across. (I might have the figures grossly wrong, but think that the RATIO between them is correct.)

On this one matter alone, I couldn't reconcile how (or if) light could travel across the universe. Light couldn't have been traveling for 26 billion years if the age of the universe is supposed to be only half of that. I still don't quite know what to make of this. What point about it am I missing?

Maybe it means that everything started (with the "big bang") 13 billion years ago and matter has been spreading outwards (at the speed of light?) in all directions from a single point for 13 billion years, so that light NEVER will be capable of travelling right across from one side of the universe to the other. It's just one of quadzillions of things that I don't understand, so I haven't lost any sleep over it. Ah, blissful ignorance. Now I can go back to the trivialities of the IT world with renewed vigor.

The other day I came across an interesting article: Praying in a Post-Einsteinian Universe (by David S. Toolan, S.J.) Though it was written some ten years ago, and lots of things cosmological have been discovered since then, it still makes fascinating reading on the relationship between proof-centric "science" and faith-centric "religion".

Such articles help me to escape for a while from the extremely limiting, introspective world of information technology. ... So if I get frustrated when I can't fathom out why a certain Desktop Search product encounters a file that it can't index and crashes, who cares? I just ponder some of those cosmological unknowns for a while and it brings everything back into perspective!

Here's a brief extract from Fr. Toolan's article (but you should read the whole thing):

- - - - - - - - - -

I thought of this contrast a little over a year ago, as I read in the New York Times (Oct.10, 1995, C1) that the National Aeronautics and Space Administration had just announced that its "deep space" radio telescopes in California and New Mexico had obtained the first clear images ever recorded of a star being born in the Milky Way. Wrapped in a thick cloud of gas and in-falling dust, the infant star had not yet ignited through nuclear fusion. It was also small, about half the size of our Sun, and by cosmic standards stood very close - a scarce 800 light years distant from Earth in the direction of the constellation Aquila. Such "protostars," we were told, enable scientists to understand the dynamic forces that once generated a solar system like our own. Nascent stars, in fact, are a cosmic commonplace. As the Times reporter put it, "While other stars are aging and collapsing in death, with a bang or a whimper, the universe is always replenishing itself with new stars."

That new star, of course, will be only one of 50 to 100 billion in the Milky Way. Nothing special. Moreover, another article in the Times a few months later (Jan.15, 1996) reported that the Hubble Space Telescope's probes into far out space had revealed that the number of galaxies had just increased five-fold over the previous count. Astronomers used to think there were some 10 billion galaxies; now the number is estimated to be about 50 billion of them, extending across some 300 billion billion light years of ever-expanding time-space.

- - - - - - - - - -

Tuesday, September 13, 2005

IBM's Sametime, QuickPlace, and AS/400

This is discussed at http://www-306.ibm.com/software/swnews/swnews.nsf/n/nhan6fzfhw?OpenDocument&Site=lotus which goes on to say:

“At the 2005 Global Customer Partner Council,” he says, “our presenters received two standing ovations — one when we announced the name change back to Sametime, and one when we announced the name change back to QuickPlace. These are some of our very best customers, and we hadn’t even shown them features yet, and they stood and applauded the names.”

Marshak attributes the overwhelmingly positive reaction not so much to the old and familiar names as to what they represent to customers. “It’s really an indication that we’re listening and that we’re giving customers what they want,” he says. “They want the products to be called Sametime and QuickPlace because these names reflect the reasons they bought the products, their need for strong real-time and team collaboration within the enterprise. And they signify our continuing commitment to and investment in these products, and in the Notes/Domino platform as a whole.”

So for me it was unfortunate that the very well-understood name of the IBM Application System/400 (AS/400) had to be rebranded as the iSeries! (I'm using a shorthand notation here, not the official, long-winded full product names.)

- - - - - - - - - - - -

When the Application System/400 (AS/400) was announced in 1988, the clear branding message was that its prime purpose was to be an APPLICATION SYSTEM (that is, an application-delivery platform).

Yes, I know that IBM later decided to rebrand all of its four platform ranges as e-business servers, hence the eServer generic platform name (with the four platform lines: zSeries, pSeries, iSeries and xSeries), and there is a good amount of rationality in this.

But with the rebranding, unless you know the background it no longer is immediately obvious (from the name alone) that the iSeries is still primarily an application platform -- at least, I assume that its prime purpose in life is as such.

So here's my jaunty request for IBM to change the name iSeries back to AS/400 again, for the same reason that Sametime and QuickPlace got their old names back!

Saturday, August 27, 2005

The Shadows of Giants

I find it quite fascinating, now as an independent outside observer still active in the IT industry, to watch things in a state of rapid flux.

This New York Times article published a few days ago is well worth a read:

Relax, Bill Gates; It's Google's Turn as the Villain

(you may have to register with the site prior to accessing the article).

A QUOTE FROM THIS ARTICLE:

In the 1990's, ... I.B.M. was widely perceived in Silicon Valley as a "gentle giant" that was easy to partner with while Microsoft was perceived as an "extraordinarily fearsome, competitive company wanting to be in as many businesses as possible and with the engineering talent capable of implementing effectively anything." ... Now, Microsoft is becoming I.B.M. and Google is becoming Microsoft.

Friday, August 26, 2005

Me and My "Shadow"

Well have I got news for you. Christmas has come early this year.

If you're quick enough and go to https://secure.ntius.com/esdsoft/ntispecial08152005_shadow_v2.asp?xID=885&OID=3066 you can purchase NTI Shadow™ 2 for Windows for only US $0.99 -- that's right, for only 99 cents!

I now have my very own inexpensive copy of Shadow. It has been up and running for a few days, periodically copying critical files onto a separate backup drive. The Windows XP system performance overheads seem negligible.

Therefore I give NTI Shadow 2.0 a rating of "Highly recommended" (and, in fact, would have done so even at the regular full price).

The product launch special offer can't last forever -- the regular price will be US $29.99 -- so part with the 99 cents and get in while the going's good.

UPDATE (08 September 2005): I've been using Shadow for a couple of weeks now, and have found that backup tasks are easy to set up and that it runs unobtrusively in the background. And here's a review that I just discovered that covers Shadow pretty well.

NetBeans Collaboration Service

So it was with great interest that I just read Charles Ditzel's recent blog entry NetBeans Collaboration Project : Collablets and Code-Aware Tools for Sharing which explains how the NetBeans Collaboration Project "allows remote sharing of code, code reviews and walk-throughs among many remote developers that may even choose to use a collablet VOIP feature to augment their work, as well as code-aware instant messaging tools."

This looks like an outstanding capability to be built right into a development tool.

There's even a free NetBeans Collaboration Service for the NetBeans community... "The Developer Collaboration feature in NetBeans 4.1 IDE allows you to connect to a collaboration server. With this feature, you can engage with other developers in conversations, wherever they are located in the next room or across the continent. You can also share your projects and files in real time, allowing others in the conversation to make changes, which are presented to the rest of the group in visual cues."

AN ASIDE:

I like Eclipse for what it can do extremely well, and I also like NetBeans very much. My approach is not to be a one-eyed fanatic about any single tool, but to have a kitbag containing multiple tools: it's a matter of "Horses for courses" or "Use the best tool for the job in hand." We all benefit from variety, innovation and competition!

Sunday, August 21, 2005

Lotus Notes Nostalgia #1

It's a demo titled Discover Lotus Notes - "The Fastest Way to a Responsive Organization" and it was originally distributed by Lotus on diskette, way back in Release 3 times in 1993 or 1994, when the server component was still called the "Lotus Notes Server" (later rebranded as the "Lotus Domino Server" at Release 4.5).

Download the demo file (less than 0.9 MB) from here or from here.

Next, unzip the file contents to a folder and copy the files onto a diskette. Then open a DOS command window and run INSTALL.COM from the diskette drive to launch the demo.

All I can say is that it should work. But to be honest I haven't tried it for a year or two, since I came across it lurking in my archives. If it does work: Enjoy!

Don't ask me why, but it won't run other than from a diskette. It expects the INSTALL.COM to be on the A: or B: drive. If you find any other way of running the demo, please let me know. Maybe there's a way of "tricking" Windows into running the demo directly from your hard drive, via the SUBST command or similar, but I've long forgotten all that DOS stuff!

Friday, August 19, 2005

Panic -- 42 is NOT the answer!

I've been struggling recently with how to manage and retrieve information from my work system. It's an AMD Athlon 64-bit notebook with 1 GB of memory and a built-in 80GB hard drive plus three additional external USB-connected 80GB drives. (Total cost for this was around Australian $4000. Contrast this with, say, the initial model of the IBM System/3 introduced in late 1969, which with a maximum hard disk of 10 MB and maximum memory of 16 KB would, in today's dollar terms, have cost maybe 100 times as much for a tiny fraction of the processing power.)

Stored on the various drives are backups and software distributions and tens of GB of documentation in various forms such as PDF and HTML files. I'm in the middle of testing various Windows-based desktop search products to see how they each handle all that information: how quickly they index it, and how powerful/accurate are their search capabilities.

Mixed results so far. I eliminated ISYS:desktop early on, because IMHO -- while it offers the most comprehensive search capabilities of all -- I found it rather more complex than all the others to install, configure, and use for searching. But the real clincher for me was that ISYS:desktop is quite expensive (hundreds of dollars).

I trialled X1 Desktop Search but uninstalled it when the two-week trial period expired -- not because it doesn't work well, but because it isn't free (although not exorbitantly priced).

The other products that I'm evaluating are free, and they are: Microsoft's Windows Desktop Search (which recently became entangled with MSN Toolbar), blinkx and Copernic Desktop Search. Each has its advantages and disadvantages in terms of usability, search features, performance, indexing space consumed, reliability, customization and so on. I'll probably publish a comparison when I finish my tests (which won't be for some weeks or months, mainly because the indexing rate is laboriously slow).

[UPDATE - 25 August 2005] ... I see that Google Desktop Search Version 2 (Beta) has just been released, so I'll probably give that a try too.

My gut feel at this early stage is that I'll never really be able to manage the information that I already have and what I'll be adding to it as time goes on. Using one of the above products, or something else, it will always be a struggle to retrieve the information I need at the time I need it -- although, following Murphy's Law, I often come across the information after I need it! Then there's the question of backup and recovery for all those gigabytes of data, which is such a huge topic that I will avoid it here.

And all of this relates to my tiny personal computing world. Expand the above to the information requirements of a large enterprise, multiple enterprises, the worldwide community, and the implications are mind-blowing. All this is illuminated by the article Pack-rat Approach to Data Storage is Drowning IT which makes you wonder where it will all end.

- - - - - - - - - - - - - - - - - - - - - - -

Apologies to Douglas Adams, who indicated that the answer is 42 but I'm not convinced that it is! (Also see H2G2.)

Wednesday, August 17, 2005

What's in a name?

I look out for sites and blogs that are a bit "different" with probing, in-depth discussions of theory and principle plus well thought out tips, techniques and working examples.

Here are two such blogs that I'd recommend to you:

The first such blog for today is IdoNotes (and sleep) by Chris Miller. He does Notes, he says, and in St Charles, Missouri ... and he has a Google Maps link to prove it. Lotusphere presentations and all sorts of other goodies, find there you will.

The second blog is that of Chris Doig and it too has some thought-provoking topics, such as:

- When is a name not a name? - placing user names in name fields in an application, and the trouble you can get into if you don't do this.

- Thoughts on Managing In-House Software Development Projects - running successful projects in as short a time as possible, using as few resources as possible.

- Using Version Numbers to Manage Database Designs - For any given Notes database, how do you know which version of the design is in production?

Incidentally, the last item about database version numbers reminds me to point out a highly relevant IBM developerWorks article: Create your own Lotus Notes template storage database with revision history - Keep track of your Lotus Notes/Domino database templates with the handy Template Warehouse. This article describes how to create the Template Warehouse, and includes a complete working example you can use at your own site.

Monday, August 15, 2005

Mainframes - the "dinosaurs" are thriving

Because I was a high school science teacher at the time, I wasn't aware that in 1964 IBM announced the epoch-making System/360 (architected to span the full 360 degrees of the scientific and commercial computing spectrum). Read about the IBM S/360 at Wikipedia and in the IBM archives. Also the quintessential paper Architecture of the IBM System/360. And there's also In the beginning there was IBM as well as The Beginning of I.T. Civilization – IBM’s System/360 Mainframe. A related must-read is Fred Brooks' 1975 classic, released in a 20th anniversary edition: Mythical Man-Month (replete with software engineering insights just as pertinent today as they were back in the 1970s: why, oh why, can't we learn from history?).

I joined IBM Australia in 1970, just in time for the next generation IBM System/370 announcement -- a new generation of hardware and IBM's first large-scale commercial implementation of virtual storage. (The earlier IBM S/360 Model 67 had a more or less experimental implementation of this, I seem to recall, in its CP/67 operating system.) I was fortunate to have had such an early exposure to IBM mainframes, long a mainstay of organizations all around the globe.

From around the mid-1970s I moved away from the mainframes to some of their mid-range systems (then called "small systems" because minicomputers and personal computers were still a little way off in the future). Firstly the IBM System/7, where I spent a year doing intricate Assembler Language programming on a suite of IBM field-developed programs (FDPs) called PIMS, used to monitor and control Plastic Injection Molding Systems. That sort of work (writing interrupt handlers, etc) was like working on an operating system. It was a most interesting year, but had nothing at all to do with the rest of my career at IBM!

Then I switched to the IBM System/3, announced in late 1969 by the IBM development laboratory in Rochester, Minnesota. This lab released a range of other small, easy-to-use systems during the 1970s and early 1980s: the System/32, the System/34 and the System/36, all extremely popular in small organizations and departments or subsidiaries of large ones.

While these systems were being produced, starting around 1972/73 IBM Rochester was working on a radical new system architecture and this was announced in October 1978 as the IBM System/38. The Wikipedia entry for S/38 puts it very well:

"System/38 and its descendants are unique in being the only existing commercial capability architecture computers. The earlier Plessey 250 was the only other computer with capability architecture ever sold commercially. Additionally, the System/38 and its descendants are the only commercial computers ever to use a machine interface architecture to isolate the application software and most of the operating system from hardware dependencies, including such details as address size and register size. The System/38 also has the distinction of being the first commercially available IBM server to have a RDBMS."

I could go on at length about other outstanding aspects of S/38, such as its implementation of "single level storage", and the low-level implementation of database journaling and commitment control, but that's for another time. While the PC world is trumpeting the arrival of 64-bit hardware and operating systems, the S/38 and AS/400 and iSeries have had such things (in one form or another) for many years -- and, most importantly, users' applications didn't have to be rewritten or recompiled to take best advantage of new features!

I was heavily involved with supporting, in Australia and Asia/Pacific countries, the System/38 and its successor the AS/400. I took an early retirement option in 1992, but this system family has remained my favorite. It's heartening to see the IBM iSeries carrying on the IBM Rochester tradition of highly-advanced yet affordable, reliable, easy-to-use systems in this new century, over thirty years since its architecture was conceived in the early 1970s.

Getting back to the IBM mainframe -- even if you term them "dinosaurs", after more than forty years of evolution they are sleek, powerful, modern beasts with plenty of life left and apparently a resurgence in popularity. The IBM Redbook referred to earlier shows how advanced the zSeries servers are these days. (And you certainly could consider the larger iSeries models to be truly powerful mainframes in their own right, despite their small-system heritage.)

I urge you to consider the arguments discussed in THE DINOSAUR MYTH - Why the mainframe is the cheapest solution for most organizations and Is the IBM mainframe a good consolidation platform? and Future of the IBM mainframe looks surprisingly good and The Mainframe is the answer to all your problems before you commit to large difficult-to-manage farms of small systems.

UPDATE: see also IBM unveils the new System z9 - The next evolution in mainframe computing platforms which talks about the crisis of complexity arising from trying to handle growth in application requirements just by adding more and more small servers. It quotes The Yankee Group who report that due to fragile datacenters "many enterprises are restricted in deploying innovative applications that could potentially create competitive advantage." The article mentions that "in a 32-way Parallel Sysplex® cluster, the z9-109 can perform 25 billion transactions a day, compared with 13 billion a day with z990s clustered in a 32-way Sysplex." Now that's some daily transaction rate, isn't it!
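To put those daily figures into more familiar terms, here is a small back-of-envelope conversion in Python. It assumes, unrealistically, that the load is spread evenly across all 24 hours; real workloads peak, so these are averages only and not a performance claim. The function name is just for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def average_per_second(transactions_per_day: float) -> float:
    """Average rate, assuming the daily total is spread evenly over 24 hours."""
    return transactions_per_day / SECONDS_PER_DAY


# Daily totals as quoted in the article for 32-way Sysplex clusters.
z9_rate = average_per_second(25e9)    # z9-109
z990_rate = average_per_second(13e9)  # z990

print(f"z9-109: about {z9_rate:,.0f} transactions per second on average")
print(f"z990:   about {z990_rate:,.0f} transactions per second on average")
print(f"ratio:  {z9_rate / z990_rate:.2f}x")
```

On those assumptions the quoted totals work out to an average of roughly 290,000 transactions per second for the z9-109 cluster, a little under twice the z990 figure.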

Codestore = Cool store!

- Sensible Web Navigation - a better way to set up view/navigator for the Web environment.

- Making Domino Behave Relationally, Part 1 and Part 2 - a nice way to have "related links" for a document that don't break if any of the underlying documents is deleted.

- Domino Rich Text In The Browser - a promising alternative to the Java Editor Applet for WYSIWYG editing of Rich Text in the Web browser environment.

Monday, August 08, 2005

Wattle - it's the annual goldrush Down Under!

It's the second half of winter down here in Victoria, in the temperate southeastern corner of Australia. We just had a week or more of pleasantly mild weather, due to northwesterly winds crossing the continent and remaining relatively warm.

But a few days ago a southwesterly change hit, bringing with it cold winds from down south in the tempestuous Great Southern Ocean, and perhaps ultimately from further south in the Antarctic. There's a forecast for light snowfalls this week in the hills ringing Melbourne. Thankfully it never snows down here in the suburbs: we get some frost, hail and sleet down here, but never snow. [UPDATE: The predicted snow did indeed arrive on Wednesday. But instead of being confined to the mountains it fell down to sea level on some of our southern ocean beaches. Snow fell in some of Melbourne's suburbs, but didn't manage to reach as far as downtown Melbourne. This was initially described as a "once in fifty year" event, then later as a "never before experienced" occurrence. So much for global warming -- maybe it's global cooling instead. (?)]

The winter of 1987/1988 at the IBM Development Laboratory in Rochester, Minnesota (working on the IBM AS/400 before it was announced) gave me a taste of bitter, numbingly cold weather. Since that time I dropped my notion of Melbourne as being really cold, and now consider it to be just uncomfortably cool and a bit miserable in short bursts.

The reference to "goldrush" in the title? Well, despite those cold fronts marching regularly across the southern ocean, there's one thing that I find heartening and uplifting about this time every winter. It's the riot of gold that bursts out in August during the annual blooming of various species of Acacia tree -- see wattle and acacia and sclerophyll in Wikipedia. The gold stands out ever so distinctly against the background green of the leaves. (As an aside, in this southeastern corner of Australia in the 19th century we had the Victorian gold rush.)

You can see why Australia chose green and gold for the colours of our national sports uniforms, as seen at the Olympic Games, etc.

Finally, here's a collection of royalty-free pictures that I snapped for you today (08/08/2005) just across the road at the nearby Wattle Park reserve: http://notestracker.com/wattlepark/

Wednesday, August 03, 2005

Now You See It, Now You Don't

I listed every type of design element for which this happens, up to and including the Domino Designer for ND7 Beta 3 (which was the latest release at that time), as follows:

- The Infobox remains closed (as it quite properly should) when you edit a Frameset, Page, Form, Outline, Subform, Script Library, Navigator, Database Icon, Help About and Help Using documents, Database Script.

- The Infobox inappropriately opens when you edit a View or Folder, Agent, Web Service, Shared Field, Shared Column, Shared Action, Image (maybe), File Resource (maybe), Applet (maybe), Style Sheet (maybe), and Data Connection (maybe). Here, the "maybes" mean that it may be appropriate for the box to open when you click on the item, since there is no underlying form to open for each of these.

You just add to your NOTES.INI file the following statement:

DesignNoInitialInfobox=1

And it works well. Relief at last! This should be the default setting, don't you agree?
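If you would rather script the change than edit the file by hand, something like the following Python sketch could do it. It is only an illustration and rests on some assumptions: that NOTES.INI is treated as plain text made up of key=value lines, and that matching the key without regard to case is acceptable. The function name is made up for this example. The function works on text only, so locating the file, choosing its encoding, taking a backup copy and closing Notes before writing are all left to you.

```python
def ensure_ini_setting(ini_text: str, key: str, value: str) -> str:
    """Return ini_text with key=value present, replacing any existing line for key."""
    wanted = f"{key}={value}"
    lines = ini_text.splitlines()
    for index, line in enumerate(lines):
        name, separator, _ = line.partition("=")
        # Assumption: keys are compared case-insensitively.
        if separator and name.strip().lower() == key.lower():
            lines[index] = wanted
            break
    else:
        # No existing line for this key, so append one at the end.
        lines.append(wanted)
    return "\n".join(lines) + "\n"


# Demonstration on sample text; "ExistingSetting" is a made-up placeholder.
sample = "[Notes]\nExistingSetting=abc\n"
print(ensure_ini_setting(sample, "DesignNoInitialInfobox", "1"))
```

Running the function a second time on its own output changes nothing, so it is safe to apply repeatedly.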

Of course the question still remains: why do the Domino Designer planners/developers allow the Infobox to open inconsistently? Another case of design by committee, I guess, or is it just lack of attention to fine detail?

Wednesday, July 27, 2005

Karen Kenworthy's Gems

Karen develops and offers as freeware for personal use a diverse range of extremely useful Power Tools (designed to run on Windows 95 and later versions).

As well as the tools themselves, you can (and should) enrol for her free newsletter which is not only quite entertaining but also erudite.

If you purchase a copy of her Power Tools on CD Karen also grants you a license to use them at work. I was impressed enough to purchase the CD -- which speaks volumes for the tools because it takes a lot to extract even a dollar or two from me!

For the programmers amongst you, she provides Visual Basic source code for each tool. And you're sure to be switched on by her clear and insightful discussions about a wide range of topics such as hashing algorithms (her Hasher tool) and ultra-high-precision arithmetic (her Calculator which defaults to a mere 10,000 digits precision but she says can be set much higher).
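For a quick taste of both topics without leaving your own machine, here is a short Python sketch using only the standard library. To be clear, this is not Karen's Visual Basic code and says nothing about how her Hasher or Calculator work internally; the function names are simply illustrative.

```python
import hashlib
from decimal import Decimal, localcontext


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hexadecimal string."""
    return hashlib.sha256(data).hexdigest()


def sqrt_to_precision(number: int, digits: int) -> Decimal:
    """Square root of number, rounded to the given count of significant digits."""
    with localcontext() as context:
        context.prec = digits  # precision applies only inside this block
        return Decimal(number).sqrt()


print(sha256_hex(b"Hello World"))
print(sqrt_to_precision(2, 50))
# Asking for 10,000 significant digits works the same way, just with more output.
print(len(str(sqrt_to_precision(2, 10_000))))
```

Changing even one byte of the input gives a completely different digest, which is what makes a hash useful for checking that a downloaded file arrived intact.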

Whether you're a programmer or not, Karen's little beauties are something you shouldn't do without.

Monday, July 25, 2005

Installation Blues, Part 2 revisited - Eclipse setup screencam demonstration

There now appears to be a much clearer download pathway for the Eclipse SDK, compared with what I experienced some months ago that prompted my earlier posting on this subject.

I have just recorded a brief screencam that shows the entire process and how simple it can be. It demonstrates the downloading and setting up of the latest Eclipse build, which as of today is Eclipse 3.1.

The whole procedure takes only 5 to 10 minutes (the download being the slowest part).

Get the screencam from here: http://notestracker.com/eclipse/Eclipse_animations.htm

Bugs can be extremely expensive to fix

A CHALLENGE:

Are you willing to "stand under the bridge you designed"? ... Read the following article to find out the significance of this challenge:

Software Engineers Aren't Doing Enough To Really Create Error-Free Software

Sunday, July 24, 2005

Installation Blues, Part 2 - Readying Eclipse for use

Now what's the first thing you look for when you install (Windows) software these days? Surely it's got to be a setup program, and you look for one named setup.exe or install.exe or something similar. And if you're like me you immediately launch this setup program without bothering to read any documentation (since the true test of a good modern installer is that it makes setup a breeze).

Well, in the case of Eclipse you're in for a surprise. Getting Eclipse ready for use is rather different from what you're used to with all the other IDEs out there. (As a preamble, I'm assuming that you already have the suitable prerequisite JRE and JDK installed, as for any other Java IDE.)

The first thing you have to know is that setting up Eclipse V3 is not an "installation" in the usual sense of the term, so let me instead use the term "readying Eclipse". There's no conventional installer that churns around for a while setting up directories and doing other mystical things and then finally tells you that Eclipse has been installed.

This difference can confuse both novices and seasoned developers. Nowhere (at least nowhere obvious, to my knowledge) is there a simple set of instructions for getting Eclipse ready for use. I haven't come across a README file in the Eclipse distributions which tells you about this. Nor did I find much about it in any of the Eclipse textbooks and tutorials. This can be a stumbling block, and sure enough I stumbled!

In the absence of any simple README file, after considerable digging around I worked out that you only have to unzip the Eclipse build package into a folder and then just launch eclipse.exe from that folder. So I got to the point where the Eclipse workbench opened in all its glory, and things were looking good or so I thought.

But more frustration lay ahead. I couldn't discover any Java support (syntax checker, compiler, etc). What was going on here, says I to myself. Was it due to some Eclipse option that I hadn't activated? I looked everywhere, but try as I might I couldn't even get my "Hello World" program compiled and running.

As a last resort, I moved into Read The Manual mode! After a bit of research, I came across an intelligible description of the Eclipse plug-in architecture and installation procedures. It indicated that Java support (the "SDK") was just one of the available plug-in options. So I had to install the Java SDK, did I? What a nuisance. So after reluctantly reading up on the Eclipse plug-in installation procedure (and stumbling a few more times), I managed to install the Java plug-in. Much to my relief, I could then compile and debug "Hello World".

Nevertheless, I wasn't a happy trooper. Why on earth did I have to go through all the rigmarole of learning the Eclipse plug-in architecture and separately install the Java plug-in? Why did neither the Eclipse online Help nor the eclipse.org web site explain in a friendly, clear and up-front fashion the simple steps needed to get Eclipse ready for use (just unzip the file into a folder and then run eclipse.exe from that folder)? Maybe they do explain it somewhere, but if so I have yet to find it!

This initial experience with Eclipse did NOT make a good first impression on me, especially in comparison with the many other smooth IDE installations I have done over the years. What had I done that was so wrong, and what did I have to do to get a simple Java compile working?

After some further research and experimentation I finally discovered where I had gone astray: I had stumbled merely because I had selected the wrong file. I had downloaded the Eclipse Platform build file (for Eclipse 3.0, the one named "eclipse-platform-3.0-win32.zip").

One of the shortcomings of the Eclipse delivery process is the way that the builds are shipped. If you visit one of the Eclipse mirror sites (such as http://mirror.pacific.net.au/eclipse/eclipse/downloads/drops/ here Down Under, in Melbourne) you are presented with an overwhelming list of builds. And when you open one of the individual builds (such as http://mirror.pacific.net.au/eclipse/eclipse/downloads/drops/R-3.0-200406251208/) there's a long list of build files to choose from. It's pretty obvious that some are for Windows, others for Linux or Solaris, and so on. But what's not obvious to the novice is that the appealing-looking "platform" variant is NOT the build file that you should be interested in (for Java).

What I didn't immediately realize was that when you unzip this "platform" file you get only a skeletal Eclipse IDE (a basic, language-neutral “platform”) and this base version does not include any language plug-ins at all. When I did come to realize this, the rest was easy.

What I should have downloaded was the Eclipse SDK build file (for Eclipse 3.0, the one named "eclipse-SDK-3.0-win32.zip"). As soon as I used this version everything went smoothly. This is the build file that has the desired Java support built in (there's no need to install the Java SDK plug-in separately). Lesson over, but what a long and painful lesson! The pity is that it should have been plain sailing all the way, and I shouldn't have had to endure any storm-tossed seas!

So, brethren, let me put it pithily:

- Eclipse should come with a simple "READ ME FIRST" document that clearly explains the salient points from above so that you don't go astray like I did.

- If it's Java you want to use -- and most Eclipse users probably do, not the JDT or RCP or other specialist aspects of Eclipse -- then be sure to download the SDK file (not the base "platform" file, or the "JDT" file, or the "RCP" file, or any of the others in the drop).
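The two lessons above (pick the SDK build, then simply unzip and launch) could be sketched in Python roughly as follows. This is only an illustration under stated assumptions: the function names `pick_sdk_build` and `ready_eclipse` are made up for this example, the file names are the two quoted in this post, and the location of eclipse.exe inside the archive is not assumed but searched for.

```python
import zipfile
from pathlib import Path


def pick_sdk_build(file_names, platform="win32"):
    """Return the first SDK build zip for the platform, or None if absent.

    Hypothetical helper: it only looks at the file name, on the assumption
    that SDK builds contain "-SDK-" as in "eclipse-SDK-3.0-win32.zip".
    """
    for name in file_names:
        lowered = name.lower()
        if "-sdk-" in lowered and platform in lowered and lowered.endswith(".zip"):
            return name
    return None


def ready_eclipse(zip_path, target_dir):
    """Unzip the build into target_dir and return the launcher path, if found."""
    target = Path(target_dir)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(target)
    # Search for the launcher rather than assuming the folder layout.
    launchers = sorted(target.rglob("eclipse.exe"))
    return launchers[0] if launchers else None


drop = ["eclipse-platform-3.0-win32.zip", "eclipse-SDK-3.0-win32.zip"]
print(pick_sdk_build(drop))
```

With a real download in hand, `ready_eclipse` would be called with the path of the SDK zip and the folder to unpack into; the returned path is the program to launch.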

Now it's over to you ... Can any of you provide similar tips for Eclipse (and post them here for the benefit of all)?

Saturday, July 23, 2005

Installation Blues, Part 1 - Installer Work Areas

Right after being launched, many installers need to unzip the distributed package into a temporary work area -- a folder on some drive or other -- before they can start on the installation proper. Some installers just forge ahead with a simple "no questions asked" approach, and (provided you have sufficient free disk space available) they usually do their job unobtrusively.

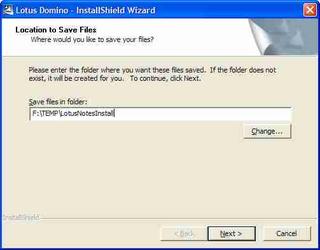

On the other hand, some installers want you to specify the location of the work area, which can be a good thing if the installer needs lots of disk space (some require hundreds of megabytes). Here's a typical dialog (click the image to view an enlargement):

It prompts you for "Location to save files. Where would you like to save your files?". When I first encountered this prompt I thought that it meant the location for the installed product, but it doesn't mean this at all. What it's really asking for is the installer work area location. Only the folder name "TEMP\LotusNotesInstall" gives you an indication of this.

It's a matter of the wording used to get the message across. Technical people often are not good with words! Also, things like this are often overlooked when focus is on the product technical plus sales/marketing documentation and not enough care is taken with installation documentation. Slip-ups like the above really ought to be filtered out during usability testing and quality control stages, shouldn't they? (I know, I know, it's hard work and by most it's not regarded as a glamorous aspect of software delivery -- but it's well worth the effort.)

Here's a superior example (again, click the image to view an enlargement):

The second example is a model of clarity. No more needs to be said. There's no room for misunderstanding, and compared to the first example you're put in control of the situation.

Do YOU have any similar examples to share, to assist my attempt to influence software vendors? If so, please post them here!

Friday, July 22, 2005

The "Do no harm" principle

That is, when a product is enhanced (a new hardware model, a new software version) it must retain ALL of the features and capabilities of the earlier model or version and they should operate the same way as before. No surprises! The new behavior must be identical -- or at least so similar to that of the old model/version -- that the user doesn't notice any significant change in behavior and is not slowed down or inconvenienced.

That is to say, the behavior of the new model/version must be a superset of the earlier behavior.

There's nothing more annoying or disruptive than when the new model/version misses out completely on an old feature or function that you need and depend on, or when some important feature/function no longer can be easily found, or when the feature/function works differently in the new version.

I was brought up (during my years at IBM) on mainframe and midrange operating systems. Generally IBM does an excellent job in ensuring that new products don't cause any grief. Nevertheless IBM makes the occasional slip-up. For example, during the early 1980s a key feature of the IBM System/38 Query Utility that users had come to love and depend on "went missing" in Query Release 3, leading to a whole lot of users being inconvenienced. (To IBM's credit, the feature was reinstated in the next release.)

Jumping forward a couple of decades: a second example is the morphing of Microsoft Windows 2000 (a.k.a. Windows NT 5.0) into Windows XP (a.k.a. Windows NT 5.1). Many useful enhancements came with Win XP; however, a number of the old features and functions were invoked differently or worked somewhat differently. Nothing earth shattering, but a fairly significant learning curve was involved in adjusting to the new environment of Win XP. In some cases it seemed that Microsoft had made changes just for the sake of change. Actually, I like Windows XP a lot, and really miss its improvements when occasionally I have had to revert to a client's Windows 2000 system. I won't be at all surprised if another big dose of transition pain lies ahead of us, when Microsoft delivers its successor: Vista/Longhorn.

(UPDATE as at early February 2006: Microsoft has just released Internet Explorer 7 Beta 2 for public preview. I've been giving IE7 Beta2 quite a workout over the last few days, and while it has some nice new features, its user interface and behaviour are rather different from those of IE6. Some things work differently, some things have just disappeared, and some old features seem to be "broken".)

This leads me to the point of this posting. It's about one product, but similar things could apply to just about any product. As (amongst other things) a software developer myself, I'm just trying to make a point and not be negatively critical: here goes, anyway ...

It relates to Eudora, Qualcomm's very nice e-mail client. It's an example of less-than-satisfactory customer service and support, and you dear reader will surely have had similar experiences or even far worse ones! From my own experiences I know how complex software development can be and how extremely vigilant and consistent you must be at all times. I chose this example not to lambaste Qualcomm in particular but because it's a contemporary example of a company spoiling a product experience by making a new version not work the same way as before.

I started using Eudora in 1993 or thereabouts (Version 3.x), and saw no good reason to change over to Microsoft Outlook when Windows 95 arrived a couple of years later, still to this day seeing no compelling reason to do so. Eudora has some excellent features, fits my work patterns well, and has had numerous functional and usability enhancements over the years.

But for me there has been one major slip-up in Eudora. I was pretty happy with Eudora up to and including Version 6.1.0.6, but my happiness evaporated when in 2004 it was superseded by Version 6.1.2 with one behavioural change that was only a minor one but nevertheless had the effect of causing me continuous pain and anguish, and caused me to be quite discontented with Qualcomm.

Why so? It's because a minor feature that worked unobtrusively up to and including Version 6.1.0.6 was changed and behaves differently in all subsequent versions. There was no way to switch off the new behavior. For my own comfort I decided to revert to (in other words, to stay locked in to) the old 6.1.0.6 version.

I receive dozens of incoming mail messages daily that are in newsletter format, with each one containing multiple embedded URL links. Up to and including V6.1.0.6 there was a very handy Eudora feature that I came to rely on heavily: you could right-click inside an open mail item and select "Send To Browser" which caused the entire message to be sent directly [without any intervention] to a window in your Web browser, from which it was more convenient to follow those multiple URL links.

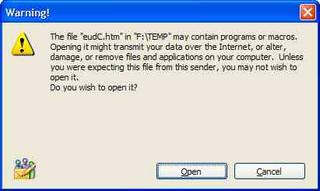

But with Eudora Version 6.1.2 (and later), an undocumented and annoying new behaviour surfaced: when you right-clicked inside a mail item and selected "Send To Browser" you now got an in-your-face warning:

Stupidly, this dialog could not be switched off. Only by staying back-level (at Version 6.1.0.6 or earlier) could you avoid the major inconvenience of dismissing this alert box by having to click on the "Open" button each and every time.

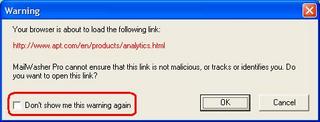

An exactly parallel situation is properly handled by Firetrust's excellent MailWasher (upon which I depend heavily, to review and get rid of all spam and other unwanted messages before allowing Eudora to read in the remainder). This is shown by the "Don't show me this warning again" check-box option in the following image:

This could easily be fixed by Qualcomm. My several attempts over a period of months to get them to revoke this unilaterally-changed behavior led nowhere. Like many software vendors unfortunately, Qualcomm has no effective mechanism for issues such as this to get to the attention of the people in their organization who could easily change it. I don't put any blame on their technical support staff, because it's their support structure/mechanism that's at fault (not the support staff, who have not been provided with the means or opportunity to do any better).

Whenever any of my own customers asks for assistance/support concerning one of my products or services, I sit up and listen! Apart from replying quickly -- same day, if possible -- I strive to relentlessly pursue the matter to resolution, and then to regularly contact them to ensure that no residual issues linger on. Sadly, this does not seem by any measure to be the way that all support is provided. (In too many cases, no support at all is provided.)

UPDATE (early November, 2005):

I've just tried out the new 7.0.0.10 Beta Version of Eudora, and to my disappointment find that it still has the same problem described above, which means that I'm still locked into the old version for the moment. HOWEVER, perseverance counts. At last, after a year or so I was put through to the right person at Qualcomm in Eudora technical support. Thank you indeed, Jim Ybarra, for immediately confirming and appreciating my predicament, contacting product development and sending me feedback the very next day! (The issue went away in Eudora version 7.0.0.16 I think it was, and now I'm a happy camper.)

UPDATE (early March, 2006):

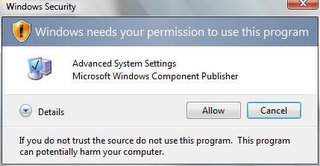

Based on the latest preview (February 2006 CTP, or Beta 2 Release 1) it seems that Microsoft is about to do the same nasty thing to us with Windows Vista. Reportedly, when you launch a program that it doesn't trust it throws up the security challenge:

Most unfortunately there's no check box to allow Vista to "learn" that you consider this particular program to be safe and that you're prepared to do away with this challenge when you call up the same item again. If this is how Vista will finally ship, then it's intolerable and pathetic!
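The "don't show me this warning again" behaviour asked for throughout this post is simple to express in code. Here is a minimal Python sketch of the idea, with loudly stated assumptions: the class `TrustPrompt`, the `ask` callback and the stand-in `fake_dialog` are all hypothetical names invented for the illustration, and nothing here reflects how Eudora, MailWasher or Vista are actually implemented.

```python
class TrustPrompt:
    """Asks before opening an item, unless the user said not to ask again."""

    def __init__(self, ask):
        # `ask` is a hypothetical callback standing in for the dialog box.
        # It returns a pair: (allowed, remember_my_choice).
        self._ask = ask
        self._trusted = set()

    def confirm(self, item):
        if item in self._trusted:
            return True  # the user already vouched for this item
        allowed, remember = self._ask(item)
        if allowed and remember:
            self._trusted.add(item)
        return allowed


prompts_shown = []


def fake_dialog(item):
    """Stand-in dialog: records the prompt, then 'clicks' Open and ticks the box."""
    prompts_shown.append(item)
    return True, True


prompt = TrustPrompt(fake_dialog)
prompt.confirm("newsletter.htm")
prompt.confirm("newsletter.htm")  # no second prompt for the same item
print(len(prompts_shown))
```

The point of the design is that the remembered choice is per item, so the user stays in control: an unfamiliar item still raises the challenge, while a familiar one no longer interrupts.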

Thursday, July 21, 2005

Distracted by multitasking interrupts

Driven to distraction by technology - "The typical office worker is interrupted every three minutes by a phone call, e-mail, instant message or other distraction. The problem is that it takes about eight uninterrupted minutes for our brains to get into a really creative state. ... humans just aren't that good at doing many things at once. ... there are only certain types of tasks that humans are good at doing simultaneously. Cooking and talking on the phone go together fine, as does walking and chewing gum (for most people). But try and do three math problems at once, and you are sure to have a problem. ... The paradox of modern life is that multitasking is, in most cases, counterproductive."

More or less complemented by body language expert Allan Pease's and his wife Barbara's observations in their popular and entertaining book: Why Men Don't Listen & Women Can't Read Maps

Saturday, July 16, 2005

The Notes/Microsoft battle continues!

(This article in The Spectator was published Friday 8th July 2005 20:48 GMT)

"Microsoft is gunning for IBM's Lotus Notes users in an effort to quadruple the size of its ISV partner community around the Office desktop productivity suite.

The company will launch a sales and marketing campaign in September that encourages 100m Notes customers to build their future collaborative software applications and services on Office and SharePoint Portal Server.

Microsoft believes it can exploit what it perceives to be uncertainty and concern among users over the future of their platform caused by IBM's newer Workplace strategy."

- - - - - - - - - - - - - - - - - - - -

Seeing is believing ...

Friday, July 15, 2005

To blog, perchance to RSS

In order to better understand the nature of feeds, and how they are best used, I have experimented with a range of popular stand-alone RSS aggregators such as RSS Bandit. I've a preference for the more convenient RSS feeds directly built into some Internet Explorer add-ons such as the excellent Avant Browser that I use heavily, and also built into the Mozilla Firefox browser. (Not being biased, I swap merrily between these tools depending on what I'm trying to achieve from task to task and from moment to moment.)

I've also researched a range of web sites for info about generating RSS feeds. Most of these sites (IBM developerWorks, to name one) focus exclusively on RSS aggregators and not RSS generators.

I just discovered one RSS-centric site that seems more comprehensive than the rest, and recommend it to you. Visit RSS Specifications and here's their section about creating RSS feeds

I have mixed feelings about the value of RSS feeds. Some of them are poorly set up, making them a little tricky to include in your aggregator. Then again there's the issue that it can easily become quite a chore to keep up with the feeds, and also it's debatable whether much of their content is worth reading in the first place -- which I'd label GOGI ("Garbage Out, Garbage In").

Further, there's The Myth of RSS Compatability (spelling: "compatibility"?) plus a range of other issues raised in Dylan Greene's interesting blog article 10 reasons why RSS is not ready for prime time

Thursday, July 14, 2005

What is IBM Workplace?

I heartily recommend you take a peek at a new IBM Redbook that's just come out: Deploying IBM Workplace Collaboration Services on the IBM iSeries Server

Forget the fact that it's an iSeries-focused book (coming from the IBM development lab in Rochester, Minnesota). There's a nice overview in the first chapter that concisely describes:

- What is IBM Workplace

- What is IBM Workplace Collaboration Services

- Workplace Collaboration Services 2.5 products

- What is IBM Workplace Services Express

Driving Miss Notesy?

http://www.asiapac.com.au/WeDriveNotesFurther.htm

Some patterns for IT architects

For example, some key links for two of the biggest players:

- IBM Redbooks Patterns for e-Business series

- IBM developerWorks - SOA and Web services

- IBM developerWorks - Web architecture

- IBM Systems Journal

- IBM Journal of Research and Development

- Microsoft Architecture Center

- Microsoft Architecture Journal

- Microsoft Systems Journal (1994 - 2000)

- MSDN Magazine (March 2000 onwards)

Good old reliable IBM

When I recently came across the IBM Communication Controller Migration Guide fond memories came flooding back of numerous IBM hardware and software networking products. Things like SNA, SDLC (this stands for "Synchronous Data Link Control", not "Software Development Life Cycle"), VTAM and NCP, APPC (a.k.a. LU 6.2), APPN, and many other fantastic networking products.

Five years into the 21st century, some things have changed significantly. You hardly ever hear of SNA any more, but the excellent heritage remains. The synopsis of this Redbook puts it elegantly:

- - - - - - - - - -

"IBM communication controllers have reliably carried the bulk of the world's business traffic for more than 25 years. Over the years, IBM controllers have been enhanced to the point that the functional capabilities of the current products, the 3745 Communication Controller and the 3746 Nways Multiprotocol Controller , surpass the capabilities of any other data networking equipment ever developed. Beyond the SNA architecture PU Type 4, beyond APPN, even beyond IP routing, these controllers support an extraordinary set of functions and protocols. Because of their long history and their functional richness, IBM controllers continue to play a critical role in the networks of most of the largest companies in the world.

Over the past decade, however, focus has shifted from SNA networks and applications to TCP/IP and Internet technologies. In some cases, SNA application traffic now runs over IP-based networks using technologies such as TN3270 and Data Link Switching (DLSw). In other cases, applications have been changed, or business processes reengineered, using TCP/IP rather than SNA. Consequently, for some organizations, the network traffic that traverses IBM communication controllers has declined to the point where it is in the organization’s best interest to find functional alternatives for the remaining uses of their controllers so they can consolidate and possibly eliminate controllers from their environments.

This IBM Redbook provides you with a starting point to help in your efforts to optimize your communication controller environment, whether simply consolidating them or migrating from them altogether. We discuss alternative means to provide the communication controller functions that you use or ways to eliminate the need for those functions outright. Where multiple options exist, we discuss the relative advantages and disadvantages of each."

- - - - - - - - - -

So download your copy of this Redbook today and learn something about this mainstay range of networking products.

IBM Redbooks are developed and published by IBM's International Technical Support Organization, the ITSO. All are available as free PDF downloads, and you can register on the mailing list for the Redbooks weekly newsletter and of course there are RSS feeds for IBM Redbooks.

Monday, July 04, 2005

Secret Agent? ... Software Sucks!

By the way, it's not just Lotus Notes that's bugging me -- in fact, Notes is one of my very favorite bits of software! So to be fair to IBM Lotus Software I'll add some posts about other stupid designs/implementations and will put all software developers under notice (including myself).

Today's little gem is "The specified agent does not exist" that appeared out of the blue when I was testing a Web app.

Well now, Mr Notes, why on earth could you not find this "specified agent"? You obviously knew its name, so why not share it with us? That way, we should know just where to start looking for this secret agent, and more rapidly fix the app!

In this case, there was no major problem but merely a misspelling of the agent's name. It was entered in the code stream as: NotesTrackerWebQueryOpen

It should have been entered as: (NotesTrackerWebQueryOpen)

So why didn't the error message come out something like this ...

The specified agent does not exist:

NotesTrackerWebQueryOpen

An agent with the following name does not exist in database travelrequests.nsf: NotesTrackerWebQueryOpen
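To illustrate how little it takes to produce the more helpful message suggested above, here is a minimal Python sketch (not LotusScript, and in no way how Domino itself is implemented). The names `AgentNotFoundError` and `find_agent` are hypothetical, and a plain dictionary stands in for the database's agent list.

```python
class AgentNotFoundError(LookupError):
    """Raised when a named agent is missing; the message says which one."""

    def __init__(self, agent_name, database):
        self.agent_name = agent_name
        self.database = database
        super().__init__(
            "An agent with the following name does not exist in "
            f"database {database}: {agent_name}"
        )


def find_agent(agents, agent_name, database):
    """Look up an agent by exact name in a dict of name -> agent."""
    try:
        return agents[agent_name]
    except KeyError:
        # The name was known all along, so pass it on to the developer.
        raise AgentNotFoundError(agent_name, database) from None


agents = {"(NotesTrackerWebQueryOpen)": "agent object"}
try:
    find_agent(agents, "NotesTrackerWebQueryOpen", "travelrequests.nsf")
except AgentNotFoundError as err:
    message = str(err)
    print(message)
```

Seeing the name in the message, without its parentheses, would have pointed straight at the misspelling.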

- - - - - - - - - - - - - - - - - - - - - - - - - - - - - - - -

It's not just Lotus Notes that has some really awful functionality and usability shortcomings. To a greater or lesser extent, ALL software products have bugs, usability issues, points of silliness and quirkiness. It's up to the product developer to fix bugs and enhance usability with each new release, not just to pile on new features while leaving the old issues unresolved.

There are books (even series of books) and Web sites dedicated to pointing out product weaknesses, inconsistencies and absurdities. The better of them adopt a positive attitude by giving workarounds and usage tips. One of these is the classic Windows Annoyances.

The Lotus Notes Sucks site tends to give the impression that Notes is quite bad, which simply is not the case! But Notes certainly could do with a careful clean-up/workover in some ways (such as silly/misleading error messages). There are some postings by Dennis Howlett and Damien Katz along these lines, with comments adding a broad range of opinions about the matter.

Friday, June 24, 2005

Notes Error - Cannot locate field

It has reinforced to me the enormously valuable functionality and flexibility of the basic Lotus Notes platform. Many of the features that have been available since Notes Release 3 or earlier make it ever so easy to develop really powerful workflow and collaboration applications in a flash (compared with most other application development products). It must never be forgotten that the "vintage" capabilities of Notes are still world-beating, while having been augmented by all of the other major features added over recent years.

HOWEVER ...

As with other development environments that have been around for a while -- and Notes' 15 years is quite ancient compared with some other products -- there are parts of the Notes product that still need some cobwebs cleaned away.

I'm using Domino Designer 6.5.4, and was surprised to see another stupid error message pop up: "Notes Error - Cannot locate field" when I went to open a document. What an inane error message. (You'll find a similar comment in my earlier post: "Note Item not found".) Where were the Lotus quality controllers when this little beast escaped?

If Notes was looking [by name] for the presence of a particular field but couldn't find it, why on earth doesn't the error message clearly state the name of this field, thus helping the developer to more quickly locate and fix the problem in the code?

As it stands, with the message in its present form you have to either guess which was the offending field or systematically search for it out of the tens or maybe hundreds of fields in the form (and perhaps any subforms that are embedded in it).
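The systematic search described above is exactly the kind of drudgery a program does well. As a rough illustration only, the following Python sketch compares the field names that code refers to against those defined on a form and its subforms, and names the ones that are missing. Everything in it is hypothetical: the function `missing_fields`, the sample field names, and the assumption that field names should be compared without regard to case.

```python
def missing_fields(referenced, form_fields, subform_fields=()):
    """Return the referenced field names that no form or subform defines.

    Assumption for this sketch: names are compared case-insensitively.
    """
    defined = {name.lower() for name in form_fields}
    for fields in subform_fields:
        defined.update(name.lower() for name in fields)
    return [name for name in referenced if name.lower() not in defined]


referenced = ["TravelDate", "Approver", "CostCentre"]
form = ["traveldate", "Destination"]
subforms = [["Approver", "ApprovalDate"]]

for name in missing_fields(referenced, form, subforms):
    print(f"Notes Error - Cannot locate field: {name}")
```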

What will IBM do about this? Well, I see that they're at last firmly reassuring everybody that the "old" Lotus Notes capabilities will last into the foreseeable future. (See "Hannover": The next release of IBM Lotus for the IBM announcement.) If there's still lots of life in Notes, then surely it's very worthwhile for IBM Lotus Software to go about ridding Notes of little "nasties" like this one and not just focus on adding more goodies.

Wednesday, June 22, 2005

High-tech suffers from terminal seriousness

How about you? Are you taking your IT career too seriously? Do you ever introduce any humor into your presentations, reports, calls, web pages, blogs ... Or are all your work interactions terminally tedious and boring?

Variety -- and humor -- are the spice of life!

Tuesday, June 21, 2005

Removing Comments from Software (a valuable tip)

I have been enjoying my grandson's first birthday, and also recovering from a 'flu shot (it's winter time here Down Under) and a major disk crash which eventually led me to spend several weeks rebuilding and reorganizing my main system. That's all out of the way, so now I can "get back in the blogging saddle" (with millions of blogs out there, I’m sure my absence wasn’t missed) ...

- - - - -

I've worked with many programming languages over the years (since the 1960s). I've never been a full-time developer -- because I've always had lots of other duties -- but I still develop a fair bit of code as and when the need arises.

And I do try to write brief but useful comments as I go, and to change the comments as the code is maintained over time.

I just came across this valuable article at StickyMinds.com about an aspect of commenting code that is frequently overlooked: Write Sweet-Smelling Comments (by Mike Clark).

- - - - -

And still on the topic of the commenting software ...

I am one of those "old timers" originally brought up when languages like FORTRAN and PL/I and BASIC (the original Dartmouth version, not the Visual Basic form) and COBOL and ASSEMBLER were in vogue. In those days, you wrote everything as source code "in the open" and placed your comments in that source code.

The source code was originally punched onto paper tape or cards or other inconvenient media types, you would often get only one overnight run to compile and test your program, and had to wait until the next day to wade through a printout and see what had happened. Get a comma out of place, and you'd have to wait until the next night for another run. You learned to code extremely carefully and to be extremely patient! But at least all of your code was right there in front of you on the printed page, and not tucked away in obscure places.

Most assuredly I don't want to turn the clock back, but I do reckon that modern development environments in certain respects tend not to lend themselves to good coding practices including good commenting. I say this because there's usually a bunch of visual and other structures that are being developed that typically do not have any place for embedding detailed relevant comments. So maintaining modern applications can turn into a nightmare because there's no embedded commentary about the structure and behaviour of these ancillary structures.

I know it from first-hand experience with trying to maintain/improve such applications. Heck, I sometimes even have trouble understanding my own work after the passage of not too much time, because I had no decent way of embedding comments everywhere they were needed and so when I came back to it had trouble remembering all of the "nooks and crannies" that needed to be worked on (or at the very least checked again) to do proper maintenance.

Sometimes there would be design properties -- and even deeply embedded code -- lurking in dark and forgotten spots, sometimes only to be discovered by chance later on! (This might have been a non-issue if the client had been willing to pay for the many days or weeks of time needed to comprehensively document all of these things, but more often than not such detailed documentation is not budgeted for.)

Hence let me make a call to action for tools vendors all and sundry to build better commenting facilities into ALL design elements that form part of their application design and development products. This could be Lotus Notes, or Java, or .NET, or just about any other product from any vendor -- you name it!

Monday, April 11, 2005

An efficient way to Debug Domino Web Agents

Principal, Asia/Pacific Computer Services

The Intractability of Domino Web Agents

I've been programming since the mid 1960s, and never cease to be surprised how tricky debugging can be on some platforms, or in some environments on a particular platform. One such environment is the debugging of Web agents running on a Domino server. I know this is a sore point for most Domino developers.

Debugging of LotusScript for the Notes Client is pretty easy, since there's a nice debugger available (although the same can't be said for the Formula Language and Java environments, which is a shame).

You put lots of loving care into developing your slick Web agent, then confidently launch it for the first time, and what do you see? Maybe nothing at all seems to happen. If you're lucky, you get a message or two in the Domino console log, but typically it is so obscure as to be of no real use to you, or on the surface seems to have no relationship to what your agent was trying to achieve!

I was in this situation a year or two ago, when extending NotesTracker, a database usage reporting toolkit (described at http://notestracker.com or http://asiapac.com.au ) from its original Notes-Client-only environment to embrace the Web browser environment.

The NotesTracker LotusScript code was quite intricate, and I had a few challenges to modify parts of it to get it working via WebQueryOpen and WebQuerySave agents. Additionally, this was some years ago, under Notes R4 and R5, so I could only use the tools available at that time. The Remote Debugger capability didn't come along until Domino 6 (and even when it did become available, I found ND6 remote debugging too fiddly for my liking, not all that easy to set up and use).

Naturally enough, not wanting to "reinvent the wheel", I did the right thing by carrying out exhaustive research to see how others had gone about debugging Domino Web agents. I searched articles, forums and knowledge bases on the Lotus Web site, as well as quite a range of non-Lotus sites (nearly all of which you'll find conveniently listed at http://notestracker.com/Links/NotesDomino.htm ).

Firstly, refer to the following IBM developerWorks articles for some excellent general guidance about debugging Domino agents:

- http://www-10.lotus.com/ldd/today.nsf/lookup/DebuggingLotusScript_1

- http://www-10.lotus.com/ldd/today.nsf/lookup/DebugLS2

- http://www-10.lotus.com/ldd/today.nsf/lookup/ND6NewAgentFeatures

Prior to ND6, the typical debugging methods suggested were to include strategically-placed Print or MessageBox statements to display debugging information, or the more elegant use of the AgentLog class to write out the debug info into a log database.

But I was seeking a more direct method. So here's what I came up with. (I generally use it instead of the other techniques like inserting Print statements.)

A brief outline of my technique follows. I suggest that you also watch the screencam demonstration at http://notestracker.com/tips/debug_web_agent.htm that I hope to make available soon.

Note that while I'm talking LotusScript in this article, the technique should also work for Domino Java Web agents and maybe even for Formula Language Web agents (although I haven't tested either of these). In fact, it might work in just about any release of any programming language on any platform (a wild claim, but we Aussies are not averse to making such assertions).

The Debugging Technique

Key steps in locating the coding problem in your Web agent:

1. Develop a single, simple LotusScript statement that will cause the agent to throw a specific, easily-recognized error message at the Domino console. (This, as usual, will incorporate your Web agent’s name, which will distinguish your agent from all the other console message sources.)

   It helps if you have handy access to the Domino console. In fact I find it most convenient if you can run the Domino server, Domino Designer, Web browser (and Notes Client, for that matter) all on a single machine -- nothing could be handier than that.

2. Place the debug statement at a strategic point in the agent’s code stream, then trigger the agent and watch for a specific termination error message at the console. You now know that the Web agent has successfully reached exactly that particular point in the code stream before striking your deliberate error trap.

3. Now cut-and-paste (not copy-and-paste) the debug statement to another strategic point further on in the agent’s code stream. Then go back to Step 2, running the Web agent again.

4. Iterate through the above two steps until eventually a point is reached in your agent’s code stream where either

   (a) there is an error message sent to the Domino console that is caused by something in the agent's code stream that is other than your deliberate debug statement, or

   (b) the agent terminates without that error message. In the latter case you know that the faulty code must lie between that statement and the end of the code stream.

What do I mean by “strategic points” in the code stream? Each such point is simply a statement that represents a major logical step in either the agent's mainstream or in one of its underlying subroutines and functions.

I strongly suggest that you utilise a binary search approach to decide where such points are in the agent's code stream. (I don't want to teach you to "suck eggs" -- but if you're not familiar with this approach you can find out about it at a site like http://www.nist.gov/dads/HTML/binarySearch.html or by doing a Google search on "binary search".)

Focus first on the agent's mainline because the error might lie in the mainline itself. If you don't locate the error in the mainline, place the debug statement one-at-a-time at the very start of each successive subroutine (or function), and move on in the “binary search” fashion within the subroutine until you’re sure that the fault does not lie in that subroutine, then move on to the next subroutine. This methodical approach is far more efficient than randomly picking points to place the statement.
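For readers who like to see the idea in runnable form, here is a minimal sketch of the "binary search" placement of the deliberate error trap. It is written in Python rather than LotusScript purely so that it can be run outside Domino, and it is only a simulation: the agent is modelled as a list of statements, and the names `TrapReached`, `run_with_trap` and `locate_fault` are hypothetical, invented for this illustration. It assumes exactly one statement in the list is faulty.

```python
class TrapReached(Exception):
    """Stands in for the deliberate Zerodivide error (hypothetical name)."""


def run_with_trap(statements, trap_at):
    """Simulate one test run with the trap placed just before statements[trap_at].

    Returns True if the trap was reached (everything before it ran cleanly),
    or False if some earlier statement failed first.
    """
    try:
        for statement in statements[:trap_at]:
            statement()
        raise TrapReached()
    except TrapReached:
        return True
    except Exception:
        return False


def locate_fault(statements):
    """Binary-search for the index of the single faulty statement.

    Returns (index_of_fault, number_of_test_runs).
    """
    lo, hi = 0, len(statements) - 1
    runs = 0
    while lo < hi:
        mid = (lo + hi + 1) // 2          # next place to move the trap to
        runs += 1
        if run_with_trap(statements, mid):
            lo = mid                      # trap reached: the fault is at mid or later
        else:
            hi = mid - 1                  # failed before the trap: the fault is earlier
    return lo, runs


def ok():
    pass


def broken():
    raise ValueError("elusive bug")


# A pretend agent of 16 statements with the fault hidden at index 11.
agent = [ok] * 11 + [broken] + [ok] * 4
index, runs = locate_fault(agent)
print(index, runs)
```

In this simulation the fault among 16 statements is pinned down in four test runs, whereas stepping the trap forward one statement at a time could take up to fifteen. In a real agent the "statements" would be the strategic points you choose by eye, so treat the sketch as an illustration of the halving idea rather than something to apply mechanically.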

My choice was to deliberately force a Zerodivide error to generate the Domino Console errors.

Naturally, in a mathematical operation, there cannot be an attempt to divide by zero, and the LotusScript compiler is smart enough to disallow statements that it is able to predict will cause division by zero, such as:

z% = 1 / 0 or z% = 1 / ( 1 - 1 )

To get around this issue, I use a statement of this general form:

zerodivide% = 1 / ( zerodivide% - zerodivide% )

The % character forces the variable to be an integer. Leave it off, and the variable becomes a LotusScript Variant and the zerodivide technique fails.

In practice, I use this terse form:

zd% = 1 / (zd% - zd% )

or simply: z% = 1 / ( z% - z% )

And in all but simple agents I use the following variation:

z% = 1 / ( z% - z% ) ' ###########################

where the string of hash symbols (#) is added to be an "eye-catcher". This helps to make your debug statement stand out in your agent's code stream, enabling you to locate the statement much more easily when you want to cut-and-paste it elsewhere. Likewise it also helps you not to overlook it (and so leave it in the agent's code stream) when you've finished debugging and need to remove it entirely.

As mentioned earlier, I try to run the Domino test server on the same workstation that I'm using for the Domino Designer and Web browser (nice, but not always achievable). This way, I can see the Zerodivide error message the very instant that it appears on the Domino Console, a fraction of a second after opening the affected page in the Web browser. (You definitely should view the screencam to see what I mean by this.) The next best thing is to run a remote Domino Console on your workstation, or to have the Domino server system very close by. This arrangement can significantly improve your debugging turnaround time, compared with having to use a remote Domino test server.

Summing Up

I'm not pretending that the above technique is "rocket science", but that was precisely my objective: to describe a straightforward and painless technique which enables you to quickly home in on the whereabouts of that elusive coding problem in your Domino Web agent. Try it out and see if it works well for you too. Otherwise, if you have an even simpler approach, send us your feedback.